The Red Edge: What China’s Naval Autonomy Tells Us About the Future Fight

Intelligentized Warfare Is Already Afloat

China is no longer experimenting at the edges of naval autonomy. It is institutionalising it. Through its “intelligentization” doctrine, the PLA has set a 2027 goal to integrate AI across sensors, weapons, decision chains, and command networks. The People’s Liberation Army Navy (PLAN) sees intelligent systems as force multipliers that compress kill chains, saturate defenders, and challenge Western C2 architecture. In Chinese terms, this isn’t merely about tech; it’s about shaping the tempo and structure of future war.

Programs like the PLA’s Joint Operations Command System, autonomous navigation trials in the South China Sea, and ongoing AI integration in logistics and surveillance platforms reveal a coherent plan. China’s Academy of Military Sciences and the China Electronics Technology Group Corporation (CETC) are deeply embedded in this change, signalling a state-industrial fusion approach to warfighting AI.

The push toward intelligentization represents both operational ambition and strategic-level pressure. The PLA knows it cannot match allied experience at sea, but it can shift the game by fielding unmanned systems at scale. That’s not a hypothetical future. It’s a force design decision already in motion.

Carrier-Sized Swarms and Robotic Motherships

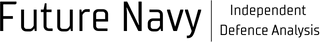

China’s new high-tech research ship, the Zhu Hai Yun, is the most visible example of how China is approaching maritime autonomy, but it is far from an outlier. It sits within a wider set of capabilities that Beijing is testing and fielding at pace, each intended to expand strategic options rather than deliver direct combat power. These platforms are not optimised to fight in the conventional sense. Instead, they are intended to operate persistently, at scale, and below the threshold of armed conflict.

Zhu Hai Yun illustrates this pattern. Operating below that threshold, such vessels support continuous presence and systems deployment in sensitive maritime regions, complicating attribution and response. This is capability development aimed as much at shaping the environment as at winning a fight.

The intent becomes clearer when Zhu Hai Yun is viewed not as a conventional ship, but as an autonomy management platform. Its value lies less in the hull itself and more in how the vessel is arranged to deploy, recover, and coordinate uncrewed systems at scale. The simplified profile schematic above highlights this architecture clearly: an extended, uninterrupted flight operations deck optimised for UAV handling; dedicated zones for uncrewed vehicle launch and recovery; a superstructure shaped around mission control and autonomy management rather than traditional bridge-centric command; and a communications mast prioritised for data exchange over line-of-sight navigation. The vessel is a mobile node in a wider machine-led system, designed to generate mass, persistence, and decision speed without putting people forward. The strategic importance lies not in what Zhu Hai Yun carries, but in what it enables.

Alongside it, smaller systems like the JARI USV, the JARI-M multipurpose autonomous platform, and adaptive loitering munitions are entering service. These are not isolated prototypes: they are modular, scalable, and increasingly integrated into PLAN force structure. Paired with advances in datalinks, quantum navigation, and shore-based targeting support, these systems form a complex mesh for distributed lethality.

For comparison, Western equivalents such as the U.S. Navy’s Ghost Fleet Overlord and the Royal Navy’s XV Patrick Blackett have only recently begun sustained trials. The scale and pace of China’s fielding suggest a narrowing technology gap and a different appetite for operational risk. The difference is not conceptual ambition, but institutional tempo. Mass without decision speed is noise. Autonomy only becomes advantage when it compresses the threat evaluation and engagement cycle.

What Happens When the Opponent Skips the TEWA Loop?

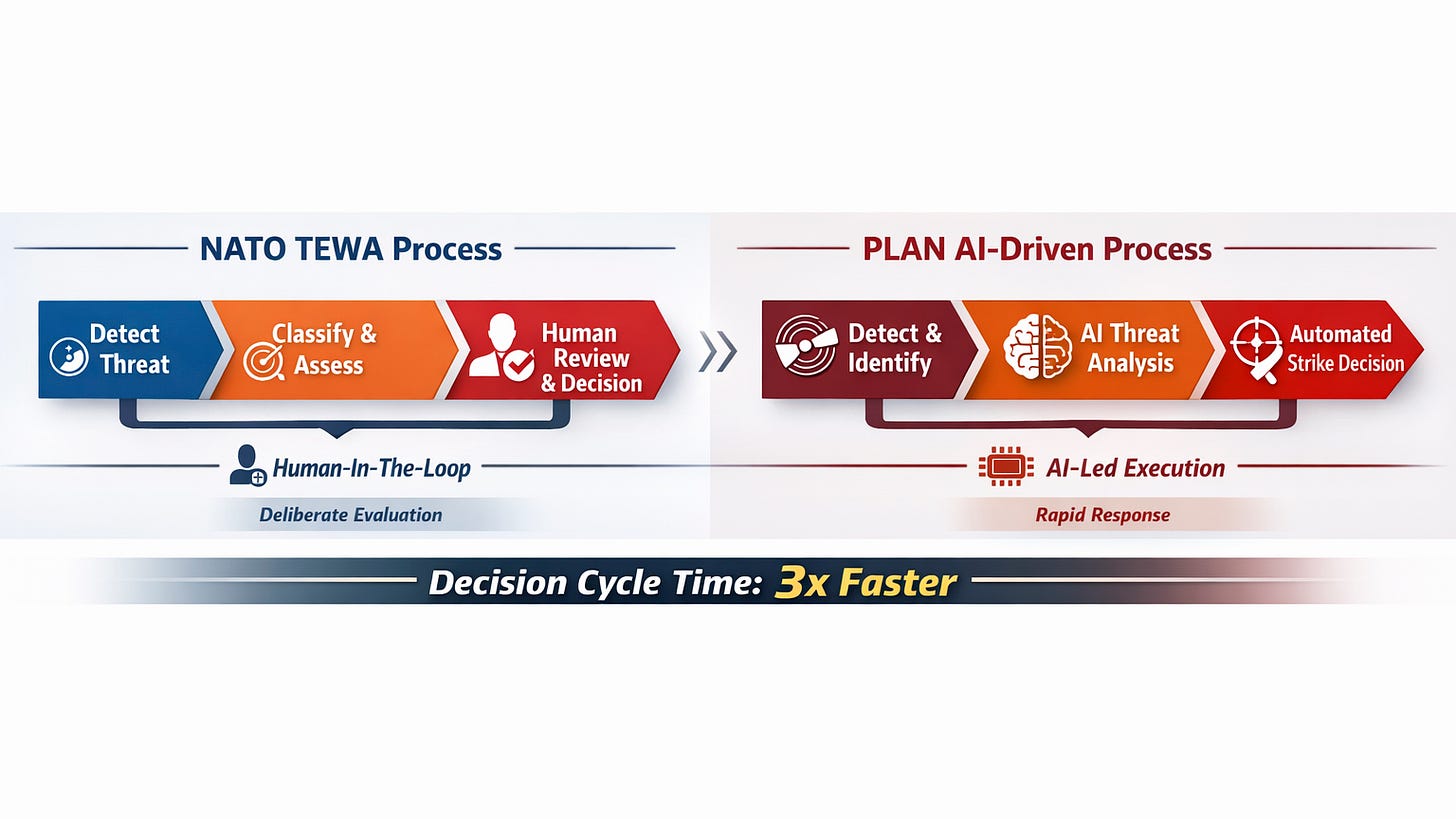

In a battlespace shaped by machine-generated mass, the decisive variable is not platform count but decision tempo. When autonomy is used to generate mass and persistence, the advantage shifts to decision speed. The limiting factor is no longer detection or reach, but how quickly a force can evaluate threats, assign effects, and act with confidence before the opponent closes the loop.

Western navies prize assurance, layered threat evaluation and weapon assignment (TEWA) logic, and human-in-the-loop design. NATO exercises like Formidable Shield have tested AI agents for threat evaluation, but always with human override. The PLAN, by contrast, signals a higher tolerance for operational risk, favouring faster, more machine-driven decision frameworks.

AI-enabled systems are being created to identify, prioritise, and potentially engage targets without pause for centralised command. In a Taiwan or South China Sea scenario, this could mean AI-guided swarms overwhelming sensors before allied operators can respond.

Decision velocity is no longer a by-product of combat power. It is combat power. Can NATO ships operate at machine tempo without impairing assurance? Will Western command frameworks tolerate losses due to slower response cycles? The fight isn’t just about platforms anymore — it’s about competing risk thresholds and command cultures.

As TEWA accelerates toward machine speed, the problem shifts from calculation to control. The central question is no longer whether systems can recommend the right action, but whether command structures, assurance frameworks, and human operators can trust, understand, and govern those recommendations in time to matter.

Impacts on Allied Force Design and C2

If TEWA is where machine speed meets combat decision-making, then command and control is where that speed must be absorbed, governed, and directed. The challenge is no longer connecting sensors to shooters, but structuring authority, trust, and information flow so that human commanders remain decisive rather than overwhelmed.

China’s trajectory places stress on allied assumptions. Project Overmatch, AUWB-MN, and the Royal Navy’s Maritime Integrated C2 program become more than capability upgrades — they become essential to retaining initiative.

The U.S. Navy’s decision to equip multiple carrier strike groups with prototype Overmatch nodes reflects urgency. NATO’s Federated Mission Networking and the Digital Ocean initiative are racing to build joint networks capable of handling dynamic, cross-domain tasking.

The UK’s own commitments, including the Type 26 frigate’s open combat systems, shared infrastructure for multi-domain data fusion, and greater investment in AI integration, are consistent with this direction. But the margin for error is shrinking. The competition is not only in sensors and weapons, but in networks, latency, and the ability to trust machine outputs in real time.

As command and control absorbs more machine-generated judgment, the question of assurance becomes unavoidable. C2 systems are no longer neutral conduits of information, but active participants in how risk is surfaced, constrained, or passed through. If commanders are to remain accountable at machine speed, they must be able to see not just what the system recommends, but how, why, and with what confidence. Assurance is no longer a compliance function; it is a command requirement.

Assurance: Governing Trust at Machine Speed

In competition with an adversary that is willing to trade assurance for speed, the role of assurance becomes decisive. It is the mechanism that allows commanders to operate at machine speed without surrendering responsibility. In an AI-enabled force, assurance defines what can be trusted, when it can be trusted, and under what conditions human judgment must intervene. Without it, faster decision cycles simply accelerate uncertainty rather than control it.

Traditionally, assurance in defence has been treated as a pre-deployment activity: validation, testing, certification, and compliance conducted long before systems encounter operational pressure. That model breaks down in environments where algorithms learn, data shifts, and autonomous behaviours adapt in real time. In contrast to China’s apparent comfort with machine-led tempo and risk absorption, Western navies remain accountable for decisions made at speed. That accountability cannot be deferred to process or policy.

For naval commanders, assurance must therefore be visible and actionable at the point of command. AI-enabled systems need to expose confidence levels, uncertainty intervals, and decision rationale in ways which match how operators think and act under pressure. A recommendation that is delivered quickly but without context is not an advantage; it is a liability. Trust at machine speed depends on legibility, not just performance.
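
To make the idea of legibility concrete, the following is a minimal, illustrative sketch of what a recommendation might need to carry before it reaches an operator. It is not drawn from any fielded combat management system: the `Recommendation` record, its field names, and the threshold values are all hypothetical, chosen only to show confidence, uncertainty, and rationale travelling together, with the final decision left to a human.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Recommendation:
    """A machine recommendation as shown to an operator (hypothetical fields)."""
    action: str                     # what the system proposes
    confidence: float               # point estimate in [0, 1]
    interval: Tuple[float, float]   # lower and upper bound on that estimate
    rationale: List[str]            # short, human-readable reasons


def requires_human_review(rec: Recommendation,
                          min_confidence: float = 0.9,
                          max_interval_width: float = 0.15) -> bool:
    """Flag recommendations that are weak, too uncertain, or unexplained.

    The thresholds are illustrative assumptions, not doctrine.
    """
    low, high = rec.interval
    too_weak = low < min_confidence
    too_uncertain = (high - low) > max_interval_width
    unexplained = not rec.rationale
    return too_weak or too_uncertain or unexplained


def present(rec: Recommendation) -> str:
    """Render one line for the operator; a human decides in either case."""
    status = "NEEDS REVIEW" if requires_human_review(rec) else "READY FOR DECISION"
    low, high = rec.interval
    reasons = "; ".join(rec.rationale) or "no rationale supplied"
    return (f"[{status}] {rec.action} | "
            f"confidence {rec.confidence:.2f} ({low:.2f}-{high:.2f}) | {reasons}")
```

In this sketch a fast recommendation that arrives without a rationale is flagged for review regardless of its confidence score, which mirrors the point above: speed without context is treated as a liability, not an advantage.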

This places new demands on both system design and command culture. Assurance frameworks have to be embedded into combat management systems and C2 architectures, not bolted on as audit tools. Operators must be trained not only to use AI outputs, but to question them, override them, and understand their failure modes. Commanders, in turn, must retain clear authority over when machine judgment is accepted and when human decision prevails.

The implication for navies facing China’s autonomy model is practical rather than philosophical. Speed alone is not enough. Advantage will belong to forces that can combine autonomy with control, machine recommendation with assured command, and invention with accountability. That is not a technology problem. It is a force design and leadership problem.

Keeping Ahead: The RN’s Next Moves

For the Royal Navy, maintaining an edge in an autonomy-driven competition will depend less on matching China platform for platform, and more on how quickly autonomy, TEWA, command, and assurance are fused into a coherent operational system. The challenge is no longer proving that AI and uncrewed systems work, but combining them in ways that preserve command authority, accelerate decision-making, and remain credible under active operational pressure.

But keeping ahead demands more than experimentation. It requires red teaming the red edge. Understanding how the PLAN fights with autonomy, how it views risk, and how it deploys mass without people is now essential.

The Royal Navy’s task is therefore not to chase autonomy for its own sake, but to shape how it is used. Success will depend on accelerating experimentation, embedding assurance into command systems, and training commanders to operate confidently alongside machine judgment. In a competition defined by speed and scale, the RN’s advantage will come from integration, restraint, and the ability to turn autonomy into a controllable source of operational leverage.

Closing Thought

No single platform defines China’s approach to naval autonomy. Instead, it is defined by the speed, scale, and confidence with which China is willing to integrate machines into the fabric of command. Vessels like Zhu Hai Yun are signals, not anomalies, pointing to a future in which decision tempo, persistence, and system-level coordination matter more than individual hulls or weapons.

For Western navies, the response cannot be to uncritically mirror that model. The competitive advantage lies elsewhere. It lies in the ability to combine autonomy with control, speed with judgment, and inventiveness with accountability.

In an AI-enabled battlespace, credibility is not judged by how fast machines can act, but by how confidently humans can command them.

The future fight will not be decided by who fields the most autonomous systems, but by who governs them best.